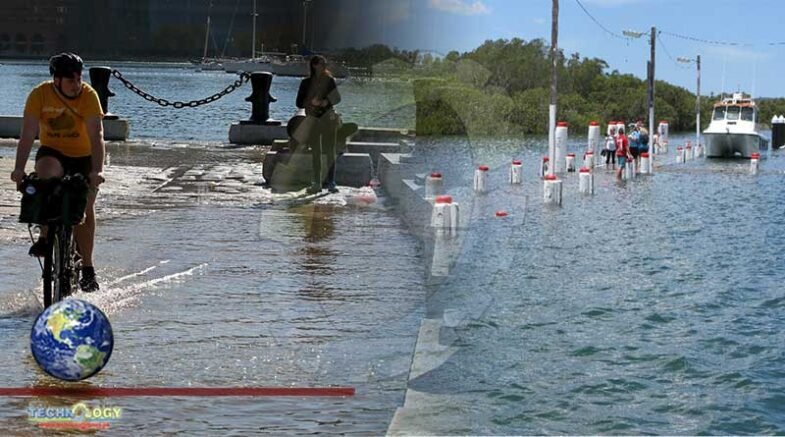

Hundreds of locals took photos of the water and linked them to GPS markers during the year’s highest astronomical tide

Christina Laughlin usually does whatever she can to avoid the flooding that plagues her neighborhood in Norfolk, Virginia, on the Chesapeake Bay. But on a blustery Sunday morning in October 2019, she donned a windbreaker and rain boots, grabbed her battered smartphone and deliberately headed straight to the high-water line.

Like her, hundreds of other locals were out and about that day, busy taking photos of the water and linking them to GPS markers during the year’s highest astronomical tide, known as the “king tide.” Norfolk is one of several eastern US coastal cities with record rates of sea level rise, and scientists hope that the data collected by these citizen scientists can help hone the ability to forecast exactly when and where damaging floods will occur.

Low-lying mid-latitude cities like Norfolk are especially vulnerable, says geographer James Voogt of the University of Western Ontario, one of the authors of a 2020 article in the Annual Review of Environment and Resources on climate events in urban areas. “You’ve got three things operating in the direction that increases the vulnerability of a city to flooding events,” he says: sea level rise, increased chances of severe precipitation events, and an abundance of impervious surfaces that prevent water absorption and encourage runoff.

As early as 2050, climate scientists predict, the average high tide in the Norfolk area will be equal to today’s king tides. But it’s not just the mid-Atlantic region: Many other parts of the world will be increasingly prone to floods that threaten lives and property. So understanding and accurately forecasting flood risks tied to extreme weather and rising tides is a key challenge for vulnerable cities around the globe.

If we don’t get the forecast exactly right, we’ll be preparing for a flood in all the wrong places, says forecast scientist David Lavers of the European Centre for Medium-Range Weather Forecasts, an independent research and weather forecasting organization that provides weather data and predictions for 34 European countries.

That’s where Laughlin comes in, along with hydrologist Derek Loftis of the Virginia Institute of Marine Science, whom Laughlin and others are assisting. In 2017, Loftis and colleagues started a project called Catch the King that uses a smartphone app to collect data from citizen scientists during king tides. He’ll use those data to validate and improve his mathematical flooding model, called the Tide Watch, for Norfolk and the surrounding area.

Loftis’s mission is simple: “I want to know where the water goes before it goes there,” he says. But as he and other scientists around the world know, collecting the data needed and then processing them quickly enough to make usable forecasts is anything but easy.

Fathoming the floods

The first step toward building a forecast is a detailed understanding of the current weather situation. “You base your model on how the atmosphere works, and you start with conditions as they are now,” says hydrologist Hannah Cloke of the University of Reading in the UK. If these data aren’t accurate and detailed, she says, the model likely won’t be very good.

Accurate flood forecasts also require an understanding of the situation on the ground: physical factors such as the flow of river water, elevation, soil saturation and land cover. By the early 2000s, supercomputing had advanced enough that hydrologists and geologists were able to integrate weather forecasting models with such measurements. But when Loftis began working on flood forecasting about a decade ago, scientists still didn’t have the fine-grained measurements of water levels that an accurate forecast needs, nor the critically important ability to forecast fast-moving floods in real time.

To address this shortcoming, Loftis developed a forecast model that relied in part on detailed maps from city governments and high-resolution land measurements with LiDAR, which uses pulsed laser beams to create a 3-D map of Earth’s surface. Testing his forecasts against past flooding events, he showed that his calculations were broadly accurate.

But he needed real-life flooding information to fine-tune his models. So in 2017, he and colleagues set up an array of 28 Internet-connected water-level sensors throughout communities in the Norfolk area, similar to systems used in Taiwan and the UK. The new sensors, combined with devices previously installed by the National Oceanic and Atmospheric Administration and the US Geological Survey, relayed rough measurements about water height and movement to a suite of supercomputers at the Virginia institute. Some cities in the area are already using data from the sensors to alert residents to flooding.
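To illustrate how sensor readings might feed a resident alert, here is a minimal Python sketch. The sensor labels, the readings and the 1.2-meter threshold are all hypothetical and are not taken from the Norfolk network; a real system would likely use a separate flood stage for each site.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str        # hypothetical sensor label
    water_level_m: float  # water height above a local reference, in meters


def sensors_over_threshold(readings, threshold_m):
    """Return the sorted IDs of sensors whose latest reading exceeds threshold_m."""
    latest = {}
    for reading in readings:
        # Readings are assumed to arrive in time order, so later ones overwrite earlier ones.
        latest[reading.sensor_id] = reading.water_level_m
    return sorted(sid for sid, level in latest.items() if level > threshold_m)


# Made-up readings for illustration only.
readings = [
    Reading("sensor-a", 0.9),
    Reading("sensor-b", 1.4),
    Reading("sensor-a", 1.3),
    Reading("sensor-c", 0.7),
]
alerts = sensors_over_threshold(readings, threshold_m=1.2)
print(alerts)
```

Only the most recent reading per sensor counts here, so a gauge that spiked earlier but has since dropped would not trigger an alert.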

Around the world, hydrologists like Loftis are trying to forecast floods, with each region facing its own challenges. Countries with fewer resources still struggle with the kinds of data-paucity problems the US once had. And without good inputs, scientists can’t build precise models, says artificial intelligence expert Sella Nevo, lead engineer for the Google Flood Forecasting Initiative, which uses artificial intelligence to estimate when and where floods will occur.

“Knowing that there was a flood in the Ganges, that’s easy,” Nevo says. “But knowing exactly down to 10 meters what areas were wet and what weren’t—that’s a challenge.” And without that level of detail, he says, the forecast won’t help people on the ground.

Like Loftis, Nevo and his team use data from stream gauges to track current water levels and how they change over time. Their fledgling initiative began by building a flood forecast for India, using more than 1,000 smart stream gauges deployed around the country by the Indian Central Water Commission. Next, the team had to build fine-grained 3-D digital elevation maps of the country. Existing elevation models didn’t have enough detail, so the group created their own by performing some mathematical wizardry on available satellite images.

With these two information streams, Nevo and colleagues built an inundation model of what will happen if, say, a river overruns its banks. This inundation model combines physics-based computations and machine learning to produce flood forecasts that can predict water levels within 15 centimeters 90 percent of the time.
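A figure like "within 15 centimeters 90 percent of the time" is a within-tolerance accuracy score. The Python sketch below shows one way such a score could be computed, assuming paired lists of forecast and measured water levels in meters. The function name and the sample numbers are illustrative and do not come from the Google team.

```python
def fraction_within(predicted_m, observed_m, tolerance_m=0.15):
    """Share of forecasts whose absolute error is at most tolerance_m (meters)."""
    if len(predicted_m) != len(observed_m):
        raise ValueError("predicted and observed lists must have the same length")
    if not predicted_m:
        raise ValueError("need at least one forecast to score")
    hits = sum(
        1 for p, o in zip(predicted_m, observed_m) if abs(p - o) <= tolerance_m
    )
    return hits / len(predicted_m)


# Made-up water levels in meters, for illustration only.
predicted = [1.20, 0.85, 2.10, 0.40]
observed = [1.30, 0.80, 2.40, 0.45]
score = fraction_within(predicted, observed)
print(f"{score:.0%} of forecasts fell within 15 cm")
```

In this toy case three of the four forecasts land within the tolerance, so the score is 75 percent.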

But models like this are all theoretical until they are tested with real data. And that’s where projects like Catch the King come in.

Holding back the tide

Loftis designed his flood forecast to save lives during hurricanes and other severe weather events that are projected to increase as the climate continues to warm. But he needed a way to test his calculations at moments when lives weren’t on the line.

He got his chance when Dave Mayfield, a retired reporter with the Virginian-Pilot newspaper in Norfolk, approached the Virginia Institute of Marine Science about creating a project to raise awareness about climate change and the consequences of sea level rise in the area. Together, they launched Catch the King in 2017. They assembled a team to design an app called Sea Level Rise that lets users map high water marks with their smartphones.

And then they got the word out through local media. In their inaugural year, more than 700 volunteers turned out to map the king tide—landing the effort in the Guinness Book of World Records as the environmental survey with the most contributions ever—and Loftis used the data to validate his street-level flood forecasting model.
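One simple way to check a forecast against GPS-tagged high-water marks is to measure how far each mark falls from the predicted waterline. The Python sketch below assumes the forecast has been reduced to a list of latitude and longitude points along the predicted high-water line. That simplification, the function names and the coordinates are illustrative assumptions, and this is not a description of how Loftis validates his model.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    # Clamp to 1 to guard against rounding error before taking the arcsine.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


def mean_offset_m(observed_marks, predicted_line):
    """Average distance from each observed mark to its nearest predicted point."""
    if not observed_marks or not predicted_line:
        raise ValueError("need at least one observed mark and one predicted point")
    offsets = [
        min(haversine_m(olat, olon, plat, plon) for plat, plon in predicted_line)
        for olat, olon in observed_marks
    ]
    return sum(offsets) / len(offsets)


# Made-up coordinates near Norfolk, for illustration only.
observed_marks = [(36.8500, -76.2900), (36.8510, -76.2915)]
predicted_line = [(36.8501, -76.2901), (36.8509, -76.2913), (36.8520, -76.2930)]
print(f"mean offset: {mean_offset_m(observed_marks, predicted_line):.1f} m")
```

A small mean offset would suggest the predicted waterline sits close to where volunteers actually saw the water; a large one would flag streets where the model needs work.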

Hundreds of volunteers have shown up for Catch the King each year since—including about 200 this year despite the pandemic—to help Loftis continue to refine his forecasts. “The more data we have, the better we will be at facing the challenge of sea level rise and climate change,” he says. But that’s only a part of the problem solved. Another big challenge for him and other forecasters is to ensure that their high-resolution, detailed forecasts are available with enough notice for residents and governments to take action before flooding begins.

Currently, the Tide Watch provides detailed forecasts up to 36 hours in advance. By constantly refining his models, Loftis hopes eventually to make reliable forecasts as much as 72 hours ahead of time. Another flood forecast system developed by researchers at George Mason University provides similar forecasts up to 84 hours in advance for residents in the Washington, DC, area.

Other vulnerable parts of the US want in on the action. “Knowing where it might flood two or three days ahead of time, that would be incredibly valuable,” says Peter Singhofen, CEO of Streamline Technologies, Inc., and an expert on Florida flood models. “We can evacuate people, we can maybe change the way we operate infrastructure like water-pump stations to potentially prevent flooding in some areas or protect property and lives.”

The Google Flood Forecasting Initiative has also made advances. The project has global ambitions, but for now, Nevo’s work has focused on the monsoon-prone Patna region in India’s northeast. Last year, the model provided accurate flood warnings in advance for incoming monsoons and cyclones—a major step forward for the region. In June, Google expanded its forecasts to encompass all of India, and it is beginning work on forecasts for Bangladesh.

Being able to better understand how climate change might affect flood patterns is critical, says climate scientist Megan Kirchmeier-Young of Environment and Climate Change Canada, a government agency promoting sustainability. “As we continue warming, we will see continued increases in the frequency and severity of heavy rainfall over North America,” she says—and that means more flash flooding.

Loftis anticipates that these changes are right around the corner for the Norfolk area. As water levels rise, more streets will become inundated, or even impassable, over longer periods of time. All of this means that creating an accurate flood forecast is a never-ending job as the rising water line of the Atlantic slowly but surely claims increasing swaths of coastal cities.

Originally published at Ars Technica